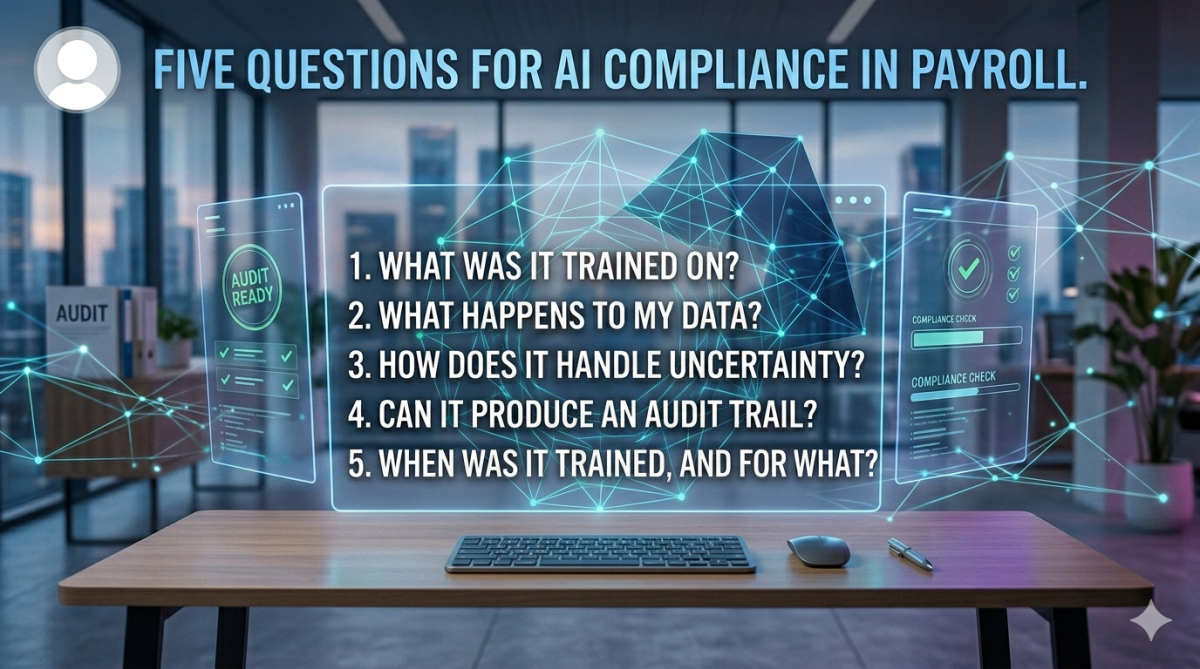

Five questions every payroll professional should ask before trusting AI with compliance work

Imagine a payroll manager posts in an online forum. They've just used a general-purpose AI tool to complete a month-end validation that normally takes three hours. It took two minutes. They're impressed, a little unsettled, and wondering whether others are doing the same.

It's a reasonable reaction. The capability is real. But the post raises a question that nobody in that thread is asking: should a compliance-sensitive process like payroll validation be handed to an AI tool without understanding what that tool actually is?

Payroll is audit-aware by instinct: checking, double-checking, four-eyes checks, 10% checks, and so on. Payroll professionals document, and they ask for evidence. It's time to apply the same discipline to the AI tools being rolled out across our organisations. Here are five questions worth asking before you allow AI into payroll processes.

1. What was it trained on, and whose data was used?

Most general AI tools are trained on large volumes of text and documents from across the internet. Some agentic payroll tools are trained on real payroll data from real organisations. Either way, the question matters: if a model learned from sensitive payroll documents, whose were they, and did those people consent to that use? For compliance teams navigating GDPR and data residency requirements, this is not a theoretical concern.

2. What happens to my data when I use it?

Submitting a gross-to-net report to an AI tool is a data transfer. Where does it go? Who can see it? Is it retained after the session? Is it used to train future versions of the model? Cloud-based AI tools often process data on infrastructure outside your jurisdiction. That matters. "Data never leaves your network" is a procurement-grade claim. "We take data seriously" is not.

3. How does it handle uncertainty and errors?

When an AI tool produces an output, does it tell you how confident it is? Does it flag the cases it is less certain about, or does it present everything with equal confidence? A tool that cannot communicate its own uncertainty is not ready for compliance use, regardless of how impressive the demo looks.
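As a minimal sketch of what flagging uncertainty could look like in practice: the field names, confidence scores, and the 0.9 threshold below are hypothetical and not taken from any particular tool. The point is only that low-confidence items get routed to a person rather than being presented alongside everything else.

```python
from dataclasses import dataclass


@dataclass
class FieldCheck:
    field: str         # hypothetical payslip field name
    value: str         # value the tool extracted or validated
    confidence: float  # tool-reported confidence, assumed to lie in [0, 1]


def needs_review(checks, threshold=0.9):
    """Return the checks whose confidence falls below the review threshold."""
    return [c for c in checks if c.confidence < threshold]


# Illustrative data only.
checks = [
    FieldCheck("gross_pay", "4200.00", 0.99),
    FieldCheck("pension_contribution", "210.00", 0.72),
]

flagged = needs_review(checks)
for c in flagged:
    print(f"Review needed: {c.field} (confidence {c.confidence:.2f})")
```

A tool that cannot supply something like the `confidence` value here leaves the reviewer with no basis for deciding where to look first.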

4. Can it produce an audit trail?

If a regulator or auditor asks how a payroll calculation was validated, "the AI checked it" is not a sufficient answer. Can the tool show its working? Can it produce a structured, reviewable output that a human can stand behind? Auditability is not a feature. It is a requirement.
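One way to picture a "structured, reviewable output" is a record written for every check. The sketch below is an assumption about what such a record might contain; the function name `audit_record`, the field names, and the example values are all hypothetical. It uses only the Python standard library.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(document_bytes, check_name, result, evidence, reviewer):
    """Build one reviewable record for a single validation check."""
    return {
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        # The hash ties the record to the exact document that was checked.
        "document_sha256": hashlib.sha256(document_bytes).hexdigest(),
        "check": check_name,
        "result": result,
        # The working a human can verify independently.
        "evidence": evidence,
        # The person who stands behind the result.
        "reviewed_by": reviewer,
    }


# Illustrative values only.
record = audit_record(
    b"example gross-to-net report",
    "net_pay_equals_gross_minus_deductions",
    "pass",
    "gross 4200.00 - deductions 1150.00 = net 3050.00",
    "payroll.reviewer",
)
print(json.dumps(record, indent=2))
```

The `evidence` and `reviewed_by` fields are what turn "the AI checked it" into something an auditor can follow.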

5. What exactly was it trained for, and when?

A general AI tool is trained for everything, which means it is optimised for nothing in particular. Payroll is a narrow, jurisdiction-specific domain. Social security rates change. Tax thresholds change. Statutory leave calculations change. A model that was built on data up to a certain date is silently wrong about everything that changed after that date, with no way to flag it. Any AI tool applied to payroll work should be able to tell you precisely what it was built to do, in which jurisdictions, and how current its underlying knowledge is.
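The currency problem can be made concrete with a simple comparison, assuming the vendor states a knowledge cutoff and the payroll team keeps its own list of statutory changes. Everything in this sketch is hypothetical: the cutoff date, the placeholder jurisdiction code "XX", and the rule entries.

```python
from datetime import date

# Hypothetical: the knowledge cutoff a vendor states for its model.
KNOWLEDGE_CUTOFF = date(2025, 6, 30)

# Hypothetical: statutory changes tracked by the payroll team.
rule_changes = [
    {"jurisdiction": "XX", "rule": "social security rate", "effective": date(2025, 1, 1)},
    {"jurisdiction": "XX", "rule": "tax threshold", "effective": date(2026, 1, 1)},
]


def changes_after_cutoff(changes, cutoff):
    """Return the rule changes that took effect after the model's cutoff."""
    return [c for c in changes if c["effective"] > cutoff]


unknown_to_model = changes_after_cutoff(rule_changes, KNOWLEDGE_CUTOFF)
for c in unknown_to_model:
    print(f"{c['jurisdiction']}: {c['rule']} changed on {c['effective']}, after the cutoff")
```

If a vendor cannot supply the cutoff date, this comparison cannot be made at all, which is itself an answer to the question.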

Payroll is adopting AI. It should. The efficiency gains are real and the administrative burden is genuine. But adoption without interrogation is how compliance failures happen.

These five questions are a starting point.

29 March 2026

Deployment-Agnostic AI: Why Training and Deployment Matter for Trust in Regulated Industries

This is the training trust problem. Organizations in healthcare, finance, and other regulated sectors cannot simply hand over years of sensitive documents for model training, even with strong encryption and access controls.

This is the inference trust problem. Even if training happens elsewhere, routing documents through a vendor’s infrastructure during operation creates ongoing compliance risk and loss of control.

- Multi-tenant SaaS: Standard enterprise security controls, minimal operational overhead

- Private cloud deployment: Running entirely within a customer’s own AWS, Azure, or GCP environment

- Dedicated or isolated infrastructure: For air-gapped or ultra-sensitive environments

- deployment environment

- model customization

- Training trust
  - Traditional: “Trust us with your data while we train.”
  - Bounded learning: “We don’t need your data.”

- Inference trust
  - Traditional: “Your data must flow through our infrastructure.”
  - Deployment-agnostic: “Run it where you need.”

- Ongoing dependency
  - Traditional: “We need your data to maintain quality.”
  - Bounded learning: “Model quality is independent of production data.”

- real training data is scarce due to privacy constraints

- compliance and explainability are essential

- trust and accuracy determine adoption

“Can we trust you with our data?” to “Where should this run?”

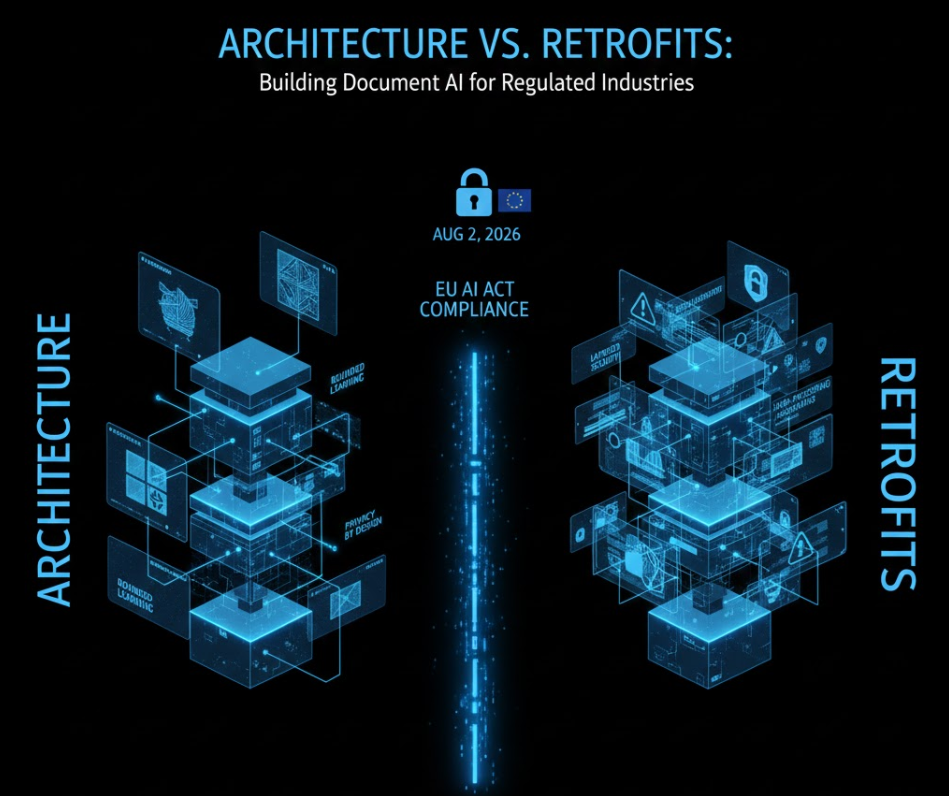

Architecture vs. Retrofits: Building Document AI for Regulated Industries

It’s architecture.

- Privacy risk is eliminated by design

  No real personal data is used during training. This removes data-retention concerns, breach exposure during development, and dependency on access to sensitive datasets.

- Accuracy improves where it matters

  A constrained scope allows the model to perform more reliably on the specific fields and relationships that are relevant to the task, rather than spreading capacity across irrelevant variation.

- Regulatory alignment is built in

  Applications are designed as professional support and verification tools, with clear task boundaries and human oversight, supporting risk-appropriate classification under the EU AI Act.

- Model training uses only synthetic payslips

- The system supports verification and quality-control workflows

- No automated decisions about individuals are made

- Human oversight is integral to the process

- The tool operates independently of payroll system configuration

- Financial services: statement reconciliation, contract analysis, audit preparation

- Healthcare: document verification, claims support, structured record analysis

- Legal: contract review, due diligence, compliance monitoring

- Insurance: claims comparison, policy analysis, underwriting support

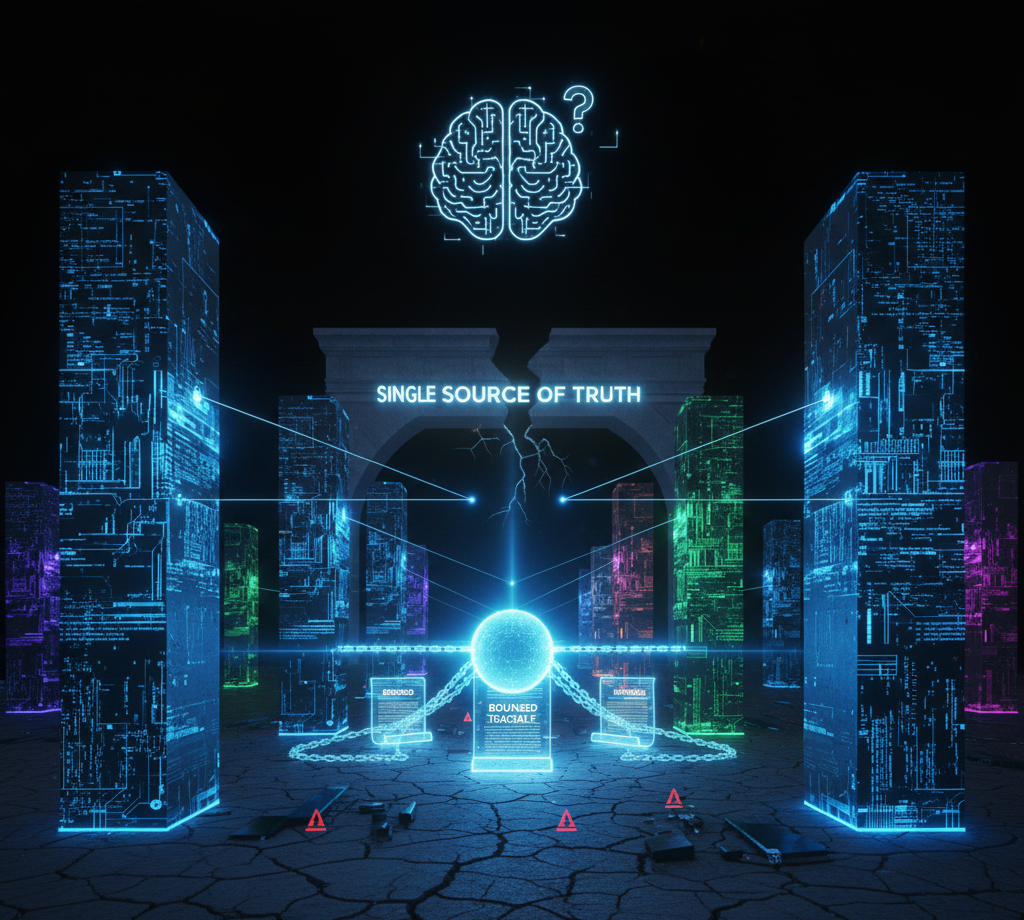

The Myth of the Single Source of Truth

- Bounded: Limited to what the document explicitly states

- Traceable: Every output linked to specific source content

- Defensible: Verifiable through audit trails

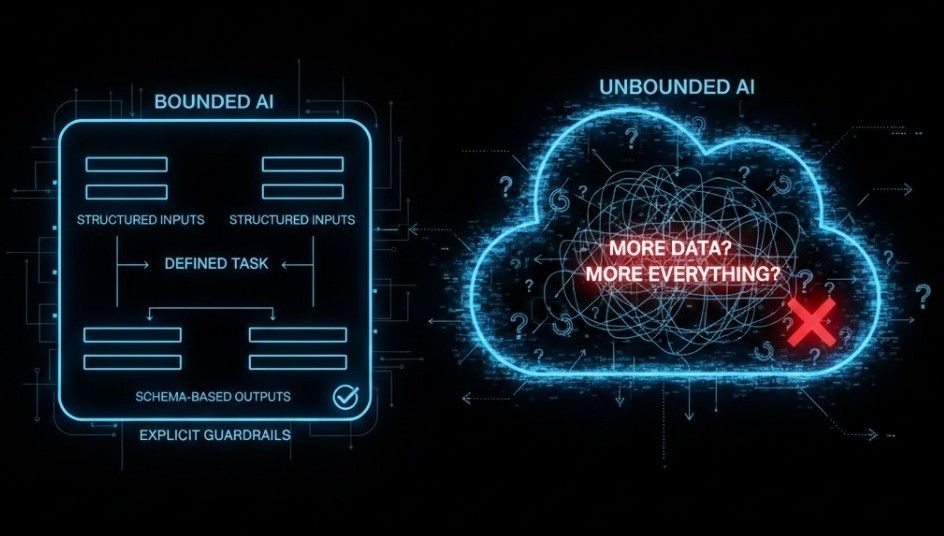

Why We Build Bounded AI

- A clearly defined task

- A clearly defined input structure

- A clearly defined output schema

- Explicit handling of out-of-scope cases
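The four properties above can be sketched in a few lines. This is an illustration under stated assumptions, not a description of any real product: the task (checking that net pay equals gross pay minus deductions), the field names, and the names `CheckResult` and `check_net_pay` are all hypothetical.

```python
from dataclasses import dataclass
from decimal import Decimal

# Defined input structure: the only fields this check accepts as sufficient.
REQUIRED_FIELDS = {"gross_pay", "deductions", "net_pay"}


@dataclass
class CheckResult:
    """Defined output schema: a fixed status plus a human-readable detail."""
    status: str  # one of "pass", "fail", "out_of_scope"
    detail: str


def check_net_pay(payslip: dict) -> CheckResult:
    """Defined task: verify that net pay equals gross pay minus deductions."""
    missing = REQUIRED_FIELDS - payslip.keys()
    if missing:
        # Explicit out-of-scope handling: refuse rather than guess.
        return CheckResult("out_of_scope", "missing fields: " + ", ".join(sorted(missing)))
    # Decimal avoids floating-point rounding surprises in monetary amounts.
    expected = Decimal(payslip["gross_pay"]) - Decimal(payslip["deductions"])
    if expected == Decimal(payslip["net_pay"]):
        return CheckResult("pass", f"net pay matches {expected}")
    return CheckResult("fail", f"expected {expected}, found {payslip['net_pay']}")


# Illustrative inputs only.
ok = check_net_pay({"gross_pay": "4200.00", "deductions": "1150.00", "net_pay": "3050.00"})
wrong = check_net_pay({"gross_pay": "4200.00", "deductions": "1150.00", "net_pay": "3100.00"})
unknown = check_net_pay({"invoice_total": "99.00"})
print(ok.status, wrong.status, unknown.status)
```

The important branch is the first one: a document that does not fit the input structure gets an explicit `out_of_scope` result instead of a plausible-looking answer.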